Most maintenance teams already rely on well-established routines. These exist in the form of checklists, inspection sheets, spreadsheets, or paper-based workflows that guide daily operations on the ground.

However, the moment organisations begin thinking about digitising maintenance, a critical question emerges:

Can our real maintenance checklists actually work inside a digital system without breaking the way we operate today?

This is where many evaluations fall short. Software is often tested using simplified demos or generic examples that do not reflect real operational complexity: conditional logic, safety approvals, asset-specific variations, and field-level constraints.

As a result, teams may choose a system that looks effective in theory but fails to support real-world execution.

Testing maintenance checklists on real data closes this gap. Instead of imagining how workflows might behave, you evaluate how your actual routines perform inside a structured digital environment. This gives a clear, practical view of what improves, what breaks, and what needs refinement before going digital.

In this guide, we’ll explore how to test maintenance checklists using real operational data, what insights to look for, and how this approach leads to better CMMS decisions, including platforms like Makula.

Why this matters (industry reality check)

In maintenance operations, the gap between “planned process” and “real execution” is often larger than expected.

Here are a few common industry observations:

The problem is not the checklist itself.

It is how well it performs when moved into a structured system.

Why generic software demos fail

Most software demonstrations are designed to show simplicity.

But maintenance work is not simple.

Common gaps in standard demos:

This creates a risk:

You evaluate the software based on an ideal scenario, not your actual operations.

What does “testing checklists on real data” actually mean?

It means evaluating a system using your real operational structure:

- Existing inspection forms

- Actual maintenance routines

- Real asset categories

- Your current fault codes

- Your real approval steps

Instead of asking:

“Can this software do maintenance?”

You ask:

“Can this software handle our maintenance process exactly as it exists today?”
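To make this concrete, here is a minimal sketch of what one real checklist might look like once it is written down as structured data. It assumes three things a paper form usually leaves implicit: steps that apply only to certain asset categories, steps that appear only after a particular earlier answer, and steps that need sign-off. The `Step` class, its field names, and the sample steps are all hypothetical illustrations, not the data model of any particular CMMS.

```python
from dataclasses import dataclass, field
from typing import Optional, Set, Tuple


@dataclass
class Step:
    """One checklist step (hypothetical structure, for illustration only)."""
    key: str
    prompt: str
    # Asset categories this step applies to; an empty set means "all assets".
    asset_categories: Set[str] = field(default_factory=set)
    # (earlier step key, answer that triggers this step), or None if unconditional.
    show_if: Optional[Tuple[str, str]] = None
    # Whether a supervisor or safety approval is required for this step.
    needs_approval: bool = False


def applicable_steps(steps, asset_category, answers):
    """Return the steps a technician would see for this asset and these answers."""
    visible = []
    for step in steps:
        # Skip steps restricted to other asset categories.
        if step.asset_categories and asset_category not in step.asset_categories:
            continue
        # Skip conditional steps whose trigger answer has not been given.
        if step.show_if is not None:
            earlier_key, trigger = step.show_if
            if answers.get(earlier_key) != trigger:
                continue
        visible.append(step)
    return visible


# Hypothetical sample data standing in for a real inspection form.
steps = [
    Step("guard", "Is the safety guard in place?"),
    Step("guard_fault", "Select a fault code for the guard",
         show_if=("guard", "no"), needs_approval=True),
    Step("coolant", "Check coolant level", asset_categories={"cnc"}),
]

conveyor_view = [s.key for s in applicable_steps(steps, "conveyor", {"guard": "no"})]
cnc_view = [s.key for s in applicable_steps(steps, "cnc", {"guard": "yes"})]
```

Writing a checklist out this way is a quick test in itself: if a real routine cannot be expressed without adding rules the sketch does not cover, that is exactly the kind of complexity to raise during an evaluation.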

Example: Real checklist transformation

Here’s how a typical maintenance checklist changes when tested in a digital environment:

Before vs After Structure

This is where inefficiencies become visible.

What teams usually discover

When maintenance checklists are tested on real data, teams often uncover hidden workflow problems.

Common findings:

- 25–40% of fields are rarely used

- Duplicate steps exist across different checklists

- Mobile usability issues (too much typing)

- Missing standard fault codes

- Overly complex approval chains

- Inconsistent naming across assets

These are not software problems; they are process visibility problems.
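Two of these findings, rarely used fields and duplicate steps, can be checked with a short script before any software is involved. The sketch below assumes completed checklists have been exported as a list of dictionaries (one per submission) and that each checklist's steps are available as a list of strings. The function names, the 30% threshold, and the sample data are hypothetical and only illustrate the idea.

```python
from collections import Counter


def field_fill_rates(records):
    """Share of records in which each field holds a non-empty value."""
    total = len(records)
    if total == 0:
        return {}
    filled = Counter()
    fields = set()
    for record in records:
        for name, value in record.items():
            fields.add(name)
            if value not in (None, ""):
                filled[name] += 1
    return {name: filled[name] / total for name in sorted(fields)}


def rarely_used(records, threshold=0.3):
    """Fields filled in less often than the (assumed) threshold."""
    rates = field_fill_rates(records)
    return [name for name, rate in rates.items() if rate < threshold]


def duplicate_steps(checklists):
    """Steps that appear in more than one checklist, ignoring case and spacing."""
    seen = {}
    for checklist_name, steps in checklists.items():
        for step in steps:
            key = " ".join(step.lower().split())
            seen.setdefault(key, set()).add(checklist_name)
    return {step: sorted(names) for step, names in seen.items() if len(names) > 1}


# Hypothetical exported submissions for one inspection form.
records = [
    {"oil_level": "ok", "vibration": "", "remarks": ""},
    {"oil_level": "low", "vibration": "", "remarks": ""},
    {"oil_level": "ok", "vibration": "", "remarks": "belt worn"},
    {"oil_level": "ok", "vibration": "", "remarks": ""},
]

# Hypothetical step lists for two checklists.
checklists = {
    "Pump weekly": ["Check oil level", "Inspect seals"],
    "Compressor weekly": ["check  oil level", "Drain condensate"],
}

unused = rarely_used(records)
repeats = duplicate_steps(checklists)
```

The numbers such a script produces are only as good as the export behind them, so treat the output as a prompt for a conversation with technicians rather than a verdict on which fields to delete.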

The “hidden ROI” of testing real checklists

Before digitisation, most teams underestimate how much inefficiency exists in their workflows.

Here is a realistic impact breakdown:

Even small improvements compound quickly at scale.

Why familiarity improves adoption

One of the biggest reasons software rollouts fail is user resistance.

Technicians do not reject software; they reject unfamiliar processes.

When teams see their own checklists inside a structured system:

- Training time drops

- Resistance decreases

- Errors reduce

- Adoption becomes natural

Familiarity is a key success factor in digital transformation.

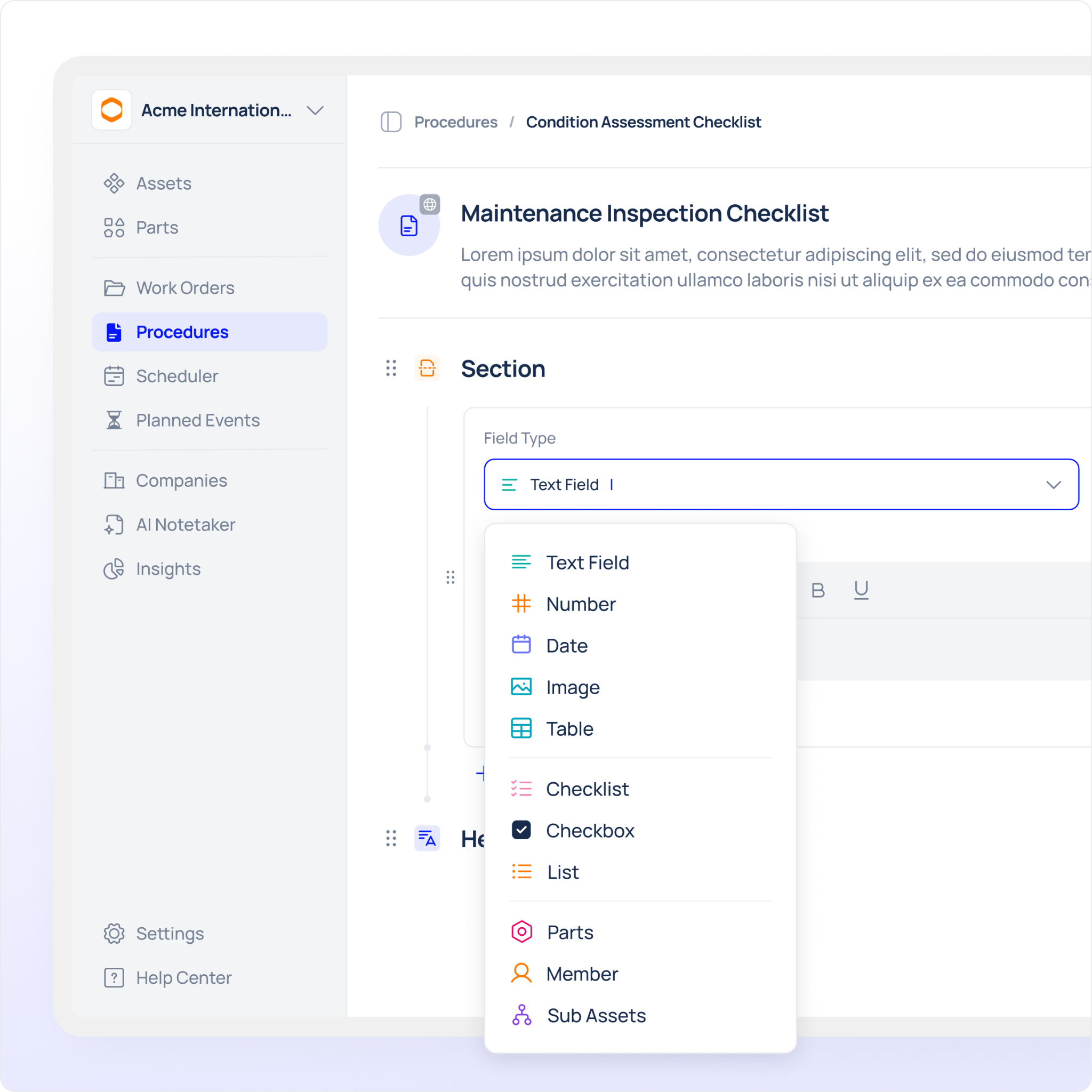

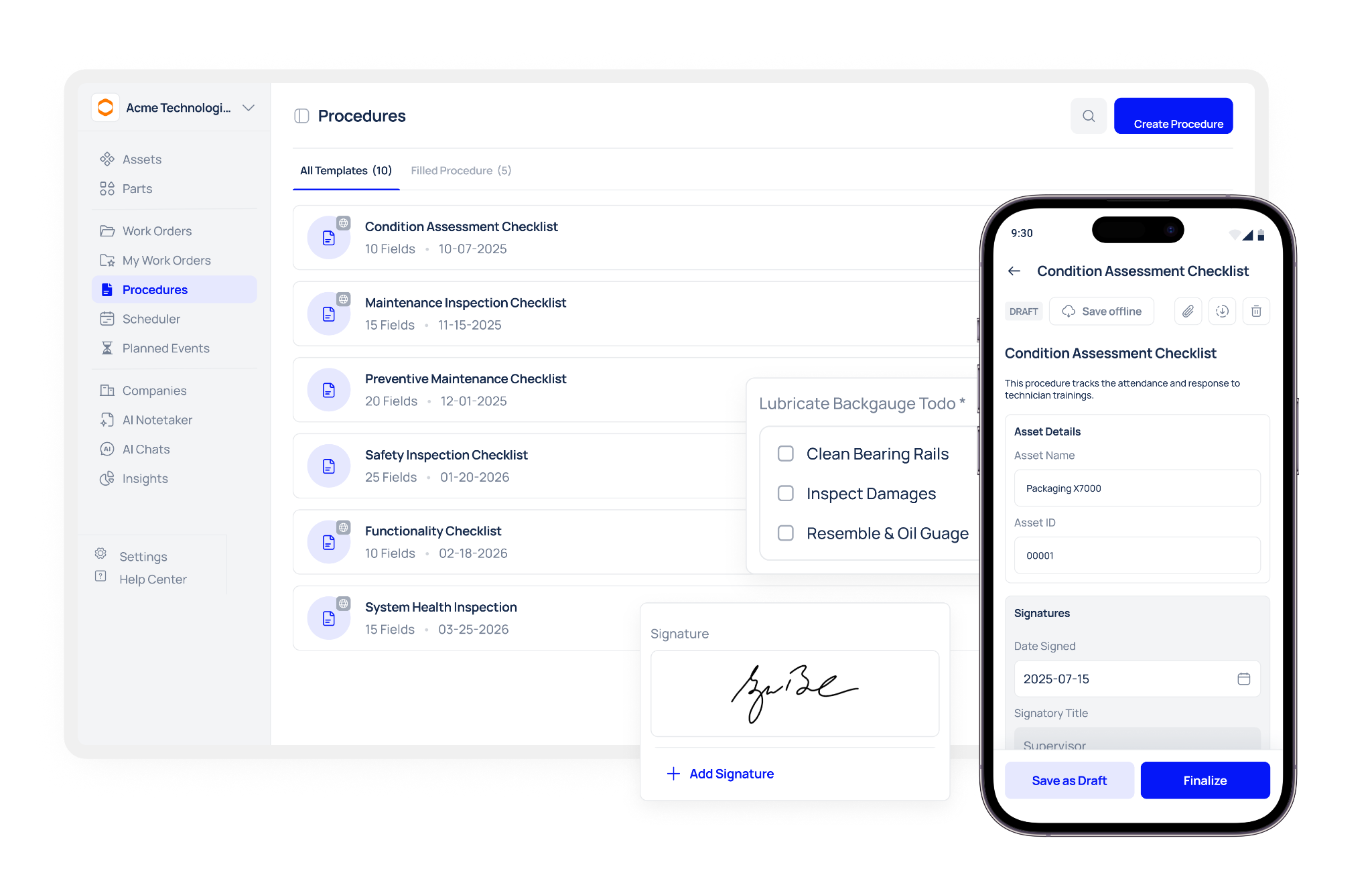

How Makula CMMS fits into this approach

Makula CMMS helps maintenance teams structure and standardise their existing workflows.

Instead of forcing teams to redesign everything from scratch, it allows:

- Existing checklists to be mapped into structured workflows

- Maintenance routines to be centralised

- Data consistency across assets and teams

- Better visibility of inspection history

This makes it easier to evaluate how real operational data behaves inside a CMMS environment.

What to evaluate when testing your checklists

Use this checklist when reviewing your workflows:

Checklist evaluation guide

Common mistakes teams make

When testing maintenance checklists, teams often:

- Focus only on UI instead of workflow logic

- Ignore mobile usability

- Test only simple tasks (not complex ones)

- Overlook data structure quality

- Assume digitisation automatically improves processes

The real goal is process validation, not software evaluation.

Final takeaway

Testing maintenance checklists on real data is not about software; it is about understanding your own operational reality.

It helps answer three key questions:

- Does your workflow actually make sense?

- Where are the inefficiencies hiding?

- What will break when you digitise it?

Once you have clarity, moving to a CMMS like Makula becomes a structured decision, not a risky guess.
